At CloudNation we see a lot of companies struggling with where to start when they want to use Kubernetes. More and more developers request a Kubernetes cluster to deploy their container workloads, which leaves the IT department to figure out where to begin. Unfortunately, it isn’t that simple: the learning curve is steep and the platform itself changes quickly.

A short history of Kubernetes and AKS

Over the past few years it has become clear that Kubernetes is a platform that is here to stay. In 2014 Google open-sourced Kubernetes as the open-source counterpart of Borg. Google had been working with containers for years, hosting its search engine, Gmail and many more of its services on containers orchestrated by Borg. Everything Google learned from working with Borg is knowledge it put into Kubernetes.

This differentiated it from other offerings at the time. Only a few months later Microsoft, Red Hat, IBM and Docker joined the Kubernetes community in order to improve the product. In the following years more companies joined the community, and many engineers built and refined Kubernetes into a full-blown, enterprise-ready container orchestration platform.

The cloud providers were quick to notice the growing demand for Kubernetes, and Microsoft was no exception. On 24 October 2017 Microsoft announced a preview of Azure Kubernetes Service (AKS), a managed Kubernetes service hosted on Azure.

Since then, AKS has been among the fastest-growing new services of the last few years. Microsoft has been steadily improving AKS and is even one of the biggest contributors to upstream Kubernetes. Today it’s easy to get a cluster up and running within Azure with the click of a button.

Using AKS makes Kubernetes easy, right?

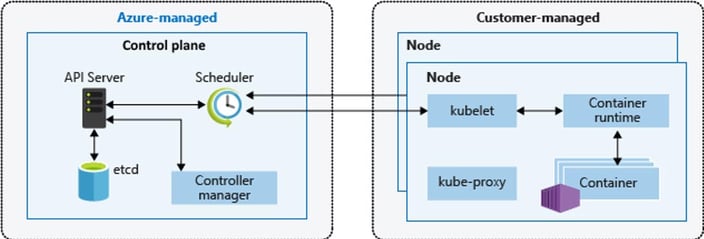

Azure takes care of the control plane of Kubernetes within Azure. This is completely free of charge. As a customer, you are responsible for the nodes of your Kubernetes cluster and you only pay for the compute of these nodes.

Here also lies the challenge. At deployment the nodes work out of the box and automatically start communicating with the control plane. After that, however, you are responsible for the health of these nodes and the applications running on them.

Here are my tips to start with:

01. Keep your AKS cluster healthy

So now you have an AKS cluster. What do you need to take care of to keep the cluster up and running? Within AKS it’s easy to scale the cluster and add extra nodes. The challenge lies in picking the right VM type. It can mean the difference between a happy cluster and one that comes crashing down after the first workload has been deployed.

Some tips to keep in mind when designing the cluster:

- Run at least 3 nodes to keep the cluster resilient.

- Specify the type of node the workload needs. Is it CPU-heavy or memory-heavy?

- Think about the storage needs of the cluster nodes.

02. Update your Kubernetes version regularly

Besides sizing the cluster correctly, you need to update the Kubernetes version of the cluster “manually”. Kubernetes updates aren’t applied automatically; as a customer you are required to pick a time to update your cluster.

First of all, you update your cluster for security reasons. Secondly, keep in mind that Microsoft Azure only provides support for a limited number of the most recent Kubernetes versions. Staying within this range is crucial if you want or need support from Microsoft in the future. But updating might impact the workloads and tools that are deployed on the cluster.

Luckily, AKS can upgrade your cluster with the click of a button. The challenge, however, is that it’s currently not possible to downgrade to a lower version. If you haven’t checked whether the upgrade impacts your workload, this might result in downtime.
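To make the "stay within the supported range" advice a bit more concrete, here is a minimal sketch of such a check. The function names and the window of three minor versions are assumptions for illustration only; always check the current AKS support policy for the real window, and fetch the real version numbers from your own cluster.

```python
# Hypothetical helper: is a cluster's Kubernetes version still inside an
# assumed support window of the newest `window` minor releases?


def parse_minor(version: str) -> tuple[int, int]:
    """Return (major, minor) from a version string such as '1.27.3' or 'v1.27'."""
    major, minor, *_ = version.lstrip("v").split(".")
    return int(major), int(minor)


def is_supported(cluster_version: str, latest_version: str, window: int = 3) -> bool:
    """True if cluster_version is one of the newest `window` minor releases.

    `window=3` is an assumption, not a value taken from the AKS documentation.
    """
    c_major, c_minor = parse_minor(cluster_version)
    l_major, l_minor = parse_minor(latest_version)
    if c_major != l_major:
        return False
    # Distance in minor versions between the newest release and the cluster.
    return 0 <= l_minor - c_minor < window


if __name__ == "__main__":
    # Made-up version numbers, purely for illustration.
    for version in ("1.29.1", "1.27.3", "1.26.6"):
        print(version, is_supported(version, latest_version="1.29.0"))
```

A check like this could run in a scheduled pipeline so that you are warned before the cluster drops out of the supported range, rather than after.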

03. Security updates for your nodes

Security updates of the underlying node infrastructure are also important. Azure takes care of updating the nodes for you. However, you need to reboot the nodes yourself. If you skip these reboots, the nodes keep pending reboots and the security updates never become active.

One of the tools that helps you with this is Kured. It checks whether nodes have a pending reboot and reboots them if needed. You can specify how many nodes may reboot at the same time and during which time frames reboots are allowed.
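The decision such a tool makes can be sketched in a few lines. This is not Kured’s implementation, only an illustration of the idea: reboot nodes with a pending reboot, but only inside an allowed time window and never more than a set number at once. All names and parameters below are hypothetical.

```python
from datetime import time


def in_window(now: time, start: time, end: time) -> bool:
    """True if `now` falls in [start, end), including windows that cross midnight."""
    if start <= end:
        return start <= now < end
    # Window such as 22:00-04:00 wraps around midnight.
    return now >= start or now < end


def nodes_to_reboot(
    pending: list[str],
    rebooting: list[str],
    now: time,
    start: time,
    end: time,
    max_concurrent: int = 1,
) -> list[str]:
    """Pick the nodes that may be rebooted right now.

    pending        -- nodes that report a pending reboot
    rebooting      -- nodes that are already being rebooted
    max_concurrent -- upper bound on simultaneous reboots (assumed default: 1)
    """
    if not in_window(now, start, end):
        return []
    free_slots = max(0, max_concurrent - len(rebooting))
    candidates = [node for node in pending if node not in rebooting]
    return candidates[:free_slots]


if __name__ == "__main__":
    picked = nodes_to_reboot(
        pending=["node-a", "node-b", "node-c"],
        rebooting=[],
        now=time(2, 0),
        start=time(1, 0),
        end=time(5, 0),
        max_concurrent=2,
    )
    print(picked)
```

Keeping the concurrency limit low matters on small clusters: with three nodes, rebooting two at once leaves a single node to carry the whole workload.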

04. Secure your containers

Besides the security of the nodes, it’s your own responsibility to secure the container workloads on AKS. By default, there is no security for containers running on the cluster, which could potentially allow containers to break your cluster. For example, it’s possible to deploy a container with too many privileges, or one with vulnerabilities that are then exposed to the outside. You can mitigate this with a solution like Falco. Tools like this help you identify incidents such as:

- A shell is running inside a container.

- A container is running in privileged mode or is mounting a sensitive path, such as /proc, from the host.

- A server process is spawning a child process of an unexpected type.

- Unexpected read of a sensitive file, such as /etc/shadow.

- A non-device file is written to /dev.

- A standard system binary, such as ls, is making an outbound network connection.
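To give a feel for what this kind of runtime detection boils down to, here is a toy sketch that matches events against a few of the rules listed above. It is not Falco’s rule syntax or engine; the event dictionaries and their field names are made up for illustration.

```python
# Toy runtime-detection sketch. Event fields ("type", "process", "path", ...)
# are hypothetical and do not correspond to Falco's real event schema.

SHELLS = {"sh", "bash", "zsh"}
SENSITIVE_FILES = {"/etc/shadow"}


def classify(event: dict) -> list[str]:
    """Return the list of findings that a single event triggers."""
    findings = []
    kind = event.get("type")
    in_container = event.get("container_id") is not None

    if in_container and kind == "exec" and event.get("process") in SHELLS:
        findings.append("shell in container")
    if kind == "container_start" and event.get("privileged", False):
        findings.append("privileged container")
    if kind == "open_read" and event.get("path") in SENSITIVE_FILES:
        findings.append("sensitive file read")
    if (
        kind == "open_write"
        and event.get("path", "").startswith("/dev/")
        and not event.get("is_device", False)
    ):
        findings.append("non-device file written to /dev")
    return findings


if __name__ == "__main__":
    events = [
        {"type": "exec", "process": "bash", "container_id": "abc123"},
        {"type": "open_read", "path": "/etc/shadow", "container_id": "abc123"},
        {"type": "open_write", "path": "/dev/payload", "is_device": False},
    ]
    for event in events:
        print(event["type"], classify(event))
```

A real tool observes system calls on the node and ships with a maintained rule set, so treat this purely as a mental model of "event in, finding out".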

There are also a lot of things you can do before the container reaches the cluster. But that’s a topic for another time.

05. Reverse proxy or application firewall

You also need to think about how you’d like to expose these container workloads. It’s possible to containerize your own reverse proxy like NGINX and add OWASP-based security rules to protect them. But you could also choose to use Azure Web Application Firewall integrated with the custom Ingress controller. Both have their pros and cons.

Running your own NGINX container gives you more fine-grained control over settings and configuration, but it might be more complex in the long run, certainly when you run it with the OWASP plugin.

Running Azure Web Application Firewall as a PaaS service offloads some of the complexity to the Azure platform. However, you need to run an extra service on your cluster so that it can communicate with the WAF.

06. Balancing the cluster

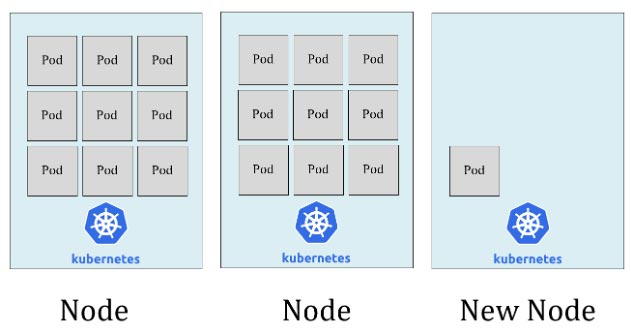

Normally the Kubernetes scheduler makes sure that all requested pods are deployed to available nodes. During this deployment the scheduler checks the nodes against multiple criteria to decide which node is the best fit. After that, the scheduler only comes into action for new deployments or when the control plane finds that it is missing pods. If the containers keep running, it doesn’t do anything.

After a few deployments and after scaling out with new nodes, the cluster might not be in balance anymore. The pods might be clustered on two of the three nodes. The scheduler has done its job and will not resolve this. It’s up to you to check whether the cluster is in balance and whether certain nodes are too busy or underutilized.

One way to resolve this is to start using the Kubernetes Descheduler. It checks whether nodes are underutilized or too busy and selectively starts evicting pods. The control plane notices that pods are missing from a deployment and triggers the scheduler, which then again looks for the best node for the new pods.

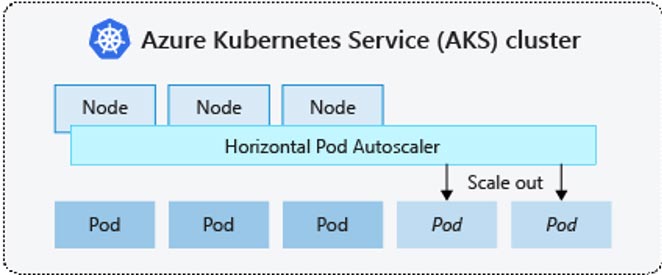
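The rebalancing idea described above can be sketched roughly as follows. This is not the Descheduler’s code, only a simplified illustration: nodes above a high threshold give up pods, but only if there is at least one node below a low threshold for them to land on. The thresholds, function names and the single utilization number per node are all assumptions.

```python
def classify_nodes(
    utilization: dict[str, float], low: float = 0.2, high: float = 0.5
) -> tuple[list[str], list[str]]:
    """Split nodes into (underutilized, overutilized) by a utilization fraction."""
    under = sorted(node for node, used in utilization.items() if used < low)
    over = sorted(node for node, used in utilization.items() if used > high)
    return under, over


def pods_to_evict(
    pods_by_node: dict[str, list[str]],
    utilization: dict[str, float],
    low: float = 0.2,
    high: float = 0.5,
    max_per_node: int = 1,
) -> list[str]:
    """Pick pods to evict from busy nodes so the scheduler can place them again."""
    under, over = classify_nodes(utilization, low, high)
    if not under:
        # No underutilized node: evicting would only shuffle pods around.
        return []
    evictions = []
    for node in over:
        # Evict at most `max_per_node` pods per busy node in one pass.
        evictions.extend(pods_by_node.get(node, [])[:max_per_node])
    return evictions


if __name__ == "__main__":
    utilization = {"node-1": 0.9, "node-2": 0.8, "node-3": 0.1}
    pods = {"node-1": ["api-1", "api-2"], "node-2": ["web-1"], "node-3": []}
    print(pods_to_evict(pods, utilization))
```

Evicting only a small number of pods per pass is a deliberate choice in this sketch: it limits disruption and gives the scheduler time to spread the replacements before the next check.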

07. Scaling

AKS supports scaling on Azure. But the only thing it takes care of is adding extra nodes when the cluster requests them. What it doesn’t do is make sure the container workload scales the right way. You need to configure this with the Horizontal Pod Autoscaler (HPA). Without it, you might end up with busy nodes that never trigger scaling, or with no downscaling at all even though the extra compute isn’t required anymore.

Check this for a deep dive on how to configure autoscaling.
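As a rough illustration of what the HPA calculates, the sketch below follows the formula from the Kubernetes documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name, the replica bounds and the example numbers are assumptions; the 10% tolerance mirrors the documented default but is configurable on a real cluster.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Replica count an HPA-style controller would aim for.

    current_metric / target_metric -- e.g. average CPU utilization in percent
    tolerance                      -- skip scaling when the ratio is close to 1.0
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 replicas at 90% CPU with a 60% target.
    print(desired_replicas(4, current_metric=90, target_metric=60))
    # 4 replicas at 30% CPU with a 60% target.
    print(desired_replicas(4, current_metric=30, target_metric=60))
```

Note that this only decides how many pods should run. Whether there are enough nodes to host them is a separate concern, which is exactly the gap between pod autoscaling and node scaling described in this section.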

Final note

With AKS, Microsoft has created a great product that resolves a lot of the challenges you would face when bootstrapping your own cluster. It is important to note, however, that even though it’s a managed cluster, there’s still a lot of management needed from you as a customer. AKS is a hybrid between IaaS and SaaS and requires quite a lot of knowledge to run smoothly.

I would, however, like to state that AKS makes Kubernetes accessible to a large audience and managed Kubernetes is the way to go for every company that’s seriously looking to start their Kubernetes or Container journey.